Kubernetes on the cheap

UPDATE: Beginning in June, 2020, GKE will charge for the control plane. This means the design below will jump from $5/month to nearly $80/month.
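As a rough check on that jump, the arithmetic can be sketched as follows. The $0.10 per cluster per hour management fee and the 730-hour average month are assumptions I am bringing in; neither figure is stated above.

```shell
# Assumed figures (not from the post): $0.10 per cluster-hour, 730 hours per month.
fee_cents_per_hour=10
hours_per_month=730
# The roughly $5/month design referred to in the update, in cents.
node_cost_cents=500

control_plane_cents=$(( fee_cents_per_hour * hours_per_month ))
total_cents=$(( control_plane_cents + node_cost_cents ))

# Integer arithmetic in cents avoids floating point, which POSIX shells lack.
printf 'control plane: $%d.%02d/month\n' $(( control_plane_cents / 100 )) $(( control_plane_cents % 100 ))
printf 'total:         $%d.%02d/month\n' $(( total_cents / 100 )) $(( total_cents % 100 ))
```

Under those assumptions the control plane alone comes to $73.00 and the total to $78.00, which is where "nearly $80/month" comes from.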

I was recently inspired by a post claiming it was possible to run Kubernetes on Google for $5 per month. One reason I have been shy about going all in with Kubernetes is the cost of running a cluster for development work and later for production.

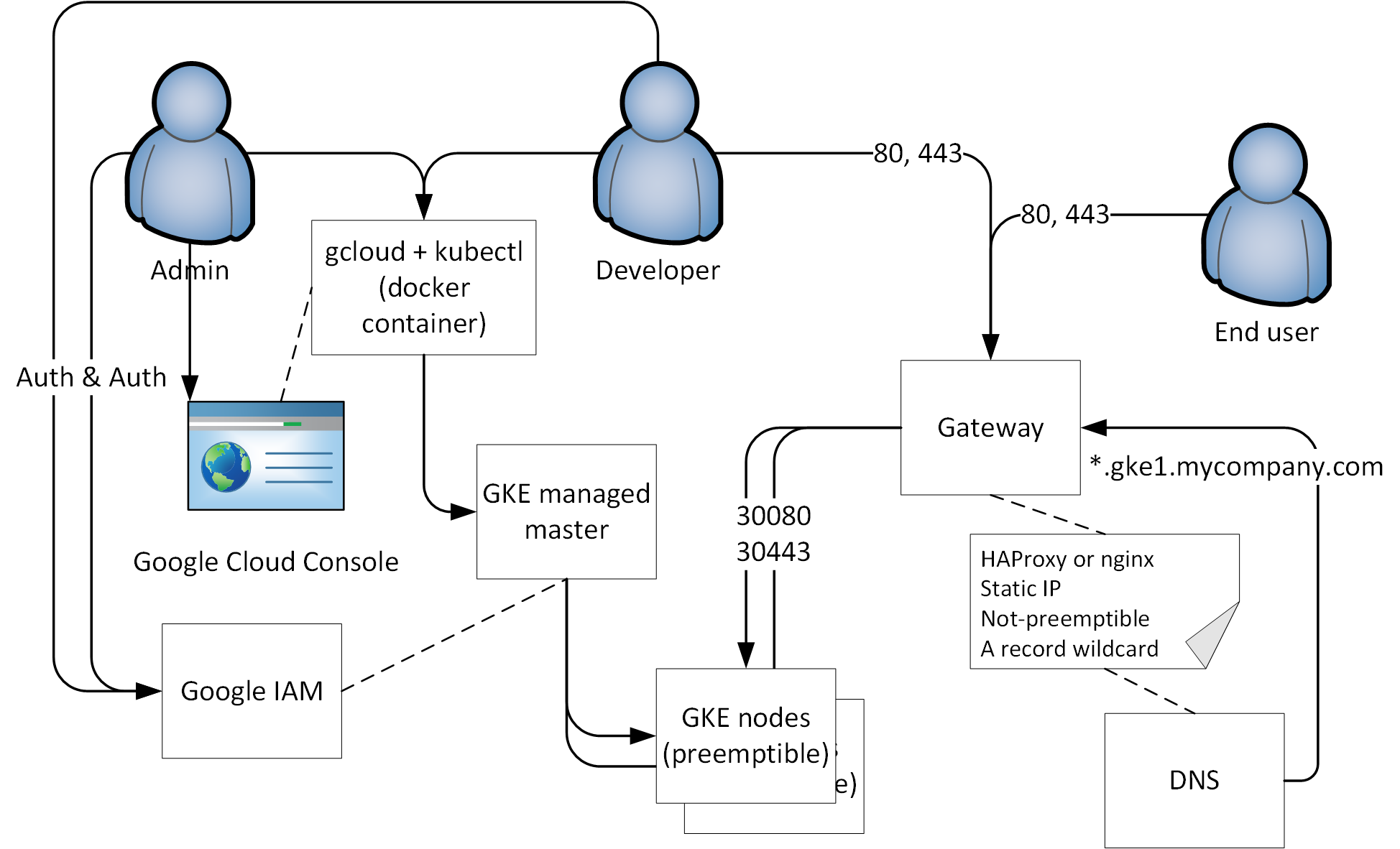

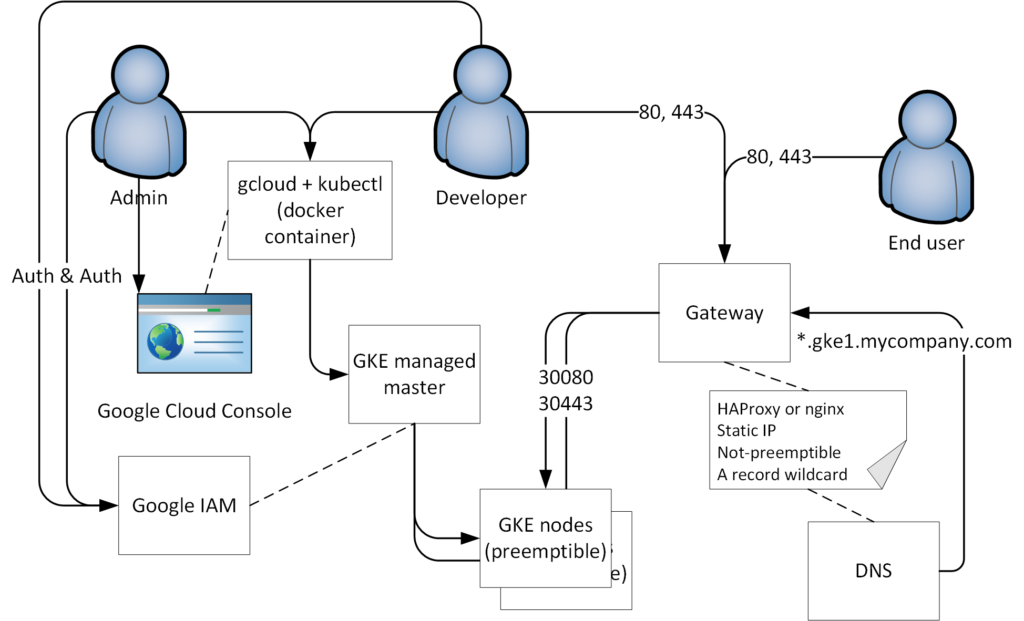

After going through the post above, I realized that I could actually afford double that amount and end up with a very usable cluster for just over $10/month on GKE. The diagram below shows what my cluster looks like.

Here are the steps I took to get there.

- Create a new cluster in GKE and use preemptible nodes

- Use a local docker container (https://hub.docker.com/r/google/cloud-sdk) to run gcloud and kubectl commands (could use cloud shell too)

- Deploy an IngressController (I use nginx)

- Create a Gateway (HAProxy or nginx)

  - This would normally be a Load Balancer, but to save money I create a micro instance that can fall under the free plan

  - Assign a static IP

- Create wildcard DNS A record pointing to the Gateway

Create a GKE cluster

For this part I really just followed the post I reference above. I love the idea of using preemptible nodes, and that saves a ton of money. The cluster always responds exactly as I would expect when a node is terminated: the node pool throws a new one back in.
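For readers who prefer the command line to the console, creating such a cluster might look like the sketch below. The cluster name, zone, machine type and node count are placeholder assumptions, not the settings from the referenced post.

```shell
# Placeholder values (assumptions); override them through the environment.
CLUSTER="${CLUSTER:-my-cluster-1}"
ZONE="${ZONE:-us-central1-a}"
MACHINE_TYPE="${MACHINE_TYPE:-g1-small}"
NUM_NODES="${NUM_NODES:-2}"

# Create a zonal cluster whose default node pool uses preemptible VMs.
create_cluster() {
  gcloud container clusters create "$CLUSTER" \
    --zone "$ZONE" \
    --machine-type "$MACHINE_TYPE" \
    --num-nodes "$NUM_NODES" \
    --preemptible
}

# Usage (needs an authenticated gcloud and a selected project):
#   create_cluster
```

The `--preemptible` flag is the part that matters for cost; everything else can be adjusted to taste.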

gcloud and kubectl

I have used the cloud shell, and I think it’s great. I use lots of containers on my local system and always have a terminal open with various tabs, so I choose to run gcloud and kubectl in a container on my local computer. I start the container like this

    docker run -ti --entrypoint /bin/bash -v ~/.config/:/root/.config/ -v /var/run/docker.sock:/var/run/docker.sock google/cloud-sdk:latest

- The first volume should point to a path on your local disk where you are comfortable storing the gcloud config

- The second volume is the path to the Docker daemon socket. This is necessary in order to use gcloud to configure the built-in Docker client to interact with Google’s container registry https://cloud.google.com/container-registry/, including pushing images into the registry.

After entering the container, I run the interactive shell, which provides autocomplete and real-time documentation https://cloud.google.com/sdk/docs/interactive-gcloud

    gcloud alpha interactive

The kubectl client is also included in the image and can be configured with the following command

    gcloud container clusters get-credentials my-cluster-1 --zone=us-central1-a

Now I can run kubectl commands against my cluster

    $ kubectl get node
    NAME                                                STATUS   ROLES    AGE   VERSION
    gke-standard-cluster-3-default-pool-37082251-03h4   Ready    <none>   1d    v1.11.6-gke.3
    gke-standard-cluster-3-default-pool-37082251-zgsd   Ready    <none>   22h   v1.11.6-gke.3

Deploy an IngressController

An IngressController will make it easy to use name based routing for all workloads deployed in the cluster. The GKE native IngressController creates a new Load Balancer per Ingress. This process takes a long time (up to 10 minutes) and the resulting load balancer has ongoing monthly cost associated with it (around $20/month). Each Load Balancer has a unique IP address, which means that DNS would have to be updated outside the Ingress process for domain based access. More details are here https://cloud.google.com/kubernetes-engine/docs/concepts/ingress

I’m partial to the nginx IngressController, and saving money by not having a Load Balancer per Ingress. This same approach should work with any IngressController. Before the IngressController can be installed, you need to give your user cluster-admin on the cluster (for the creation of the namespace)

    kubectl create clusterrolebinding cluster-admin-binding --clusterrole cluster-admin --user $(gcloud config get-value account)

I then followed the standard instructions here, https://kubernetes.github.io/ingress-nginx/deploy/ for the first command. Instead of the second GKE specific command, which would create a Load Balancer Service, I used the below Service

    kind: Service
    apiVersion: v1
    metadata:
      name: ingress-nginx
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    spec:
      type: NodePort
      selector:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
      ports:
        - name: http
          port: 80
          nodePort: 30080
        - name: https
          port: 443
          nodePort: 30443

The above service will bind ports 30080 and 30443 on all Nodes in the cluster and route any traffic to those ports to running instance(s) of the nginx IngressController. You can also scale the number of instances up so that there are instances running on each Node.

    kubectl scale deploy nginx-ingress-controller --replicas 2 -n ingress-nginx
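To confirm that the Service really bound the node ports described above, a small check along these lines can help. The function names are hypothetical; the Service name, namespace and port numbers are the ones used in this post.

```shell
# Print the node ports bound by the ingress-nginx Service, space separated.
ingress_node_ports() {
  kubectl get service ingress-nginx \
    --namespace ingress-nginx \
    --output jsonpath='{.spec.ports[*].nodePort}'
}

# Compare the bound ports with the ones the gateway will be configured to use.
verify_node_ports() {
  actual="$(ingress_node_ports)"
  if [ "$actual" = "30080 30443" ]; then
    echo "ok: $actual"
  else
    echo "unexpected node ports: $actual" >&2
    return 1
  fi
}

# Usage (needs kubectl configured for the cluster):
#   verify_node_ports
```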

Create a Gateway

As I mentioned above, the typical approach would be to put a Google Load Balancer in front of the IngressController, at an extra cost of $20 or more per month. This is a great service, but for this development cluster the LB alone would cost double what the cluster does, so I came up with a way to use either HAProxy or nginx on an f1-micro instance, which can fall under the Always Free tier. I personally use HAProxy, but I tried both, so I include the nginx option below. You only need one, not both.

HAProxy

For the Gateway, I click Create Instance in the Google Compute console. I then use the settings below.

Under the Networking settings, I select Network Interfaces. From there I select the External IP (this defaults to ephemeral) and choose Create an IP. You could also reuse an address that you have already reserved and that is not currently in use. When you’re done with all these settings, click Create.
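The same console steps can be approximated with gcloud. This is only a sketch: the VM name, region and zone are hypothetical, the f1-micro machine type is my assumption for the free-tier micro instance, and the firewall rule stands in for the console's "Allow HTTP traffic" option.

```shell
# Placeholder values (assumptions); override them through the environment.
REGION="${REGION:-us-central1}"
ZONE="${ZONE:-us-central1-b}"
GATEWAY="${GATEWAY:-gke1-gateway}"   # hypothetical VM name, reused as the network tag

create_gateway() {
  # Reserve a regional static external IP.
  gcloud compute addresses create "${GATEWAY}-ip" --region "$REGION"

  # Create the micro VM and attach the reserved address by name.
  gcloud compute instances create "$GATEWAY" \
    --zone "$ZONE" \
    --machine-type f1-micro \
    --address "${GATEWAY}-ip" \
    --tags "$GATEWAY"

  # Allow inbound HTTP to VMs that carry the gateway tag.
  gcloud compute firewall-rules create "${GATEWAY}-allow-http" \
    --allow tcp:80 \
    --target-tags "$GATEWAY"
}

# Usage (needs an authenticated gcloud and a selected project):
#   create_gateway
```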

Configure the VM to run HAProxy

After the VM boots, I used the browser based SSH connection to run the following commands

    sudo apt-get update
    sudo apt-get install -y haproxy
    /usr/sbin/haproxy -v

This installs haproxy and confirms that it is installed by getting the version. Next I edit /etc/haproxy/haproxy.cfg (I use vim), and add the following to that file.

    resolvers gcpdns
        nameserver dns 169.254.169.254:53
        hold valid 1s

    listen stats
        bind 127.0.0.1:1936
        mode http
        stats enable
        stats uri /
        stats realm Haproxy\ Statistics
        stats auth stats:stats

    frontend gke1-80
        bind 0.0.0.0:80
        acl is_stats hdr_end(host) -i stats.gke1.company.com
        use_backend srv_stats if is_stats
        default_backend gke1-80-back

    backend gke1-80-back
        mode http
        balance leastconn
        server gke1-3h04 gke-standard-cluster-3-default-pool-37082251-3h04.us-central1-b.c.myproj-1a337.internal:30080 check resolvers gcpdns
        server gke1-gszd gke-standard-cluster-3-default-pool-37082251-gszd.us-central1-b.c.myproj-1a337.internal:30080 check resolvers gcpdns

    backend srv_stats
        server Local 127.0.0.1:1936

The resolvers section makes it possible for the backend to do DNS lookups for the Node hosts' IP addresses. The nameserver, 169.254.169.254:53, serves all Google Compute internal DNS names, such as the hostnames of the GKE nodes. This is necessary because the nodes are preemptible and get new IP addresses when they respawn after being terminated. That is also why we reference them by hostname in the backend section. The frontend routes requests for the stats hostname to the srv_stats backend; everything else goes to the gke1-80-back backend. I can then confirm the configuration file is valid and restart HAProxy with the following commands.

    /usr/sbin/haproxy -c -f /etc/haproxy/haproxy.cfg
    sudo systemctl restart haproxy
    sudo systemctl status haproxy

Log files are in /var/log/haproxy.log. You should be able to see a valid http response using curl 🙂

    curl localhost
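If the node pool is ever resized or recreated, the server lines in the gke1-80-back backend have to be rewritten with the new node names. A minimal sketch of a helper that prints those lines is shown here; the function name is hypothetical, and the zone and project values are the example ones from the configuration above.

```shell
# Example values taken from the configuration in this post; replace with your own.
ZONE="${ZONE:-us-central1-b}"
PROJECT="${PROJECT:-myproj-1a337}"

# Print one HAProxy "server" line per node name.
# $1 is the node port; the remaining arguments are node names.
render_servers() {
  port="$1"
  shift
  for node in "$@"; do
    # The text after the last dash of the node name serves as a short label.
    short="${node##*-}"
    printf '    server gke1-%s %s.%s.c.%s.internal:%s check resolvers gcpdns\n' \
      "$short" "$node" "$ZONE" "$PROJECT" "$port"
  done
}

render_servers 30080 \
  gke-standard-cluster-3-default-pool-37082251-3h04 \
  gke-standard-cluster-3-default-pool-37082251-gszd
```

The node names could come from `kubectl get nodes -o jsonpath='{.items[*].metadata.name}'`, and passing 30443 instead of 30080 would produce the lines for an HTTPS backend.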

Nginx

The process for nginx is very similar. Create the VM in the same way, but use the following commands to install and enable nginx

    sudo apt-get update
    sudo apt-get install -y nginx
    sudo systemctl start nginx
    sudo systemctl enable nginx
    sudo systemctl status nginx

You will need to create a config file, like /etc/nginx/conf.d/gke1.company.com.conf. Unlike HAProxy, realtime DNS resolution can only be accomplished when using one host. The reason for this is that the host has to be a variable, and nginx doesn’t support variables in an upstream block. The following configuration will work

    server {
        listen 80;
        listen [::]:80;
        server_name *.gke1.company.com;

        location / {
            resolver 169.254.169.254;
            proxy_set_header Host $host;
            set $gke13h04 "http://gke-standard-cluster-3-default-pool-37082251-3h04.us-central1-b.c.myproj-1a337.internal:30080";
            proxy_pass $gke13h04;
        }
    }

Logs are in /var/log/nginx/.

Wildcard DNS for the Gateway

The last step is to create a wildcard DNS record for the gateway. Usually this is as easy as creating an A record for “*.gke1.company.com” and having it respond with the static IP of the gateway. This will look different for each DNS solution, so I won’t show that here. As long as you chose a static IP, this should never need to change, regardless of how many workloads you run on your cluster.

In the diagram above, you can see that requests follow this path

Request -> DNS -> Gateway -> IngressController -> Workload

Workloads

Workloads need an Ingress that will expect traffic on the chosen wildcard (at any subdomain level). For example, if I had a workload deployed to my cluster as follows

    kubectl run kubernetes-bootcamp --image=gcr.io/google-samples/kubernetes-bootcamp:v1 --port=8080
    kubectl expose deploy kubernetes-bootcamp --type=ClusterIP --name=kubernetes-bootcamp

I could use an Ingress like this

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: kubernetes-bootcamp-ingress
      annotations:
        nginx.ingress.kubernetes.io/rewrite-target: /
    spec:
      rules:
        - host: kubernetes-bootcamp.gke1.company.com
          http:
            paths:
              - path: /
                backend:
                  serviceName: kubernetes-bootcamp
                  servicePort: 8080

The gateway would be sending all traffic to the IngressController, which would then configure itself to look for the specified host. When the host matched, the traffic would be proxied to the service, kubernetes-bootcamp, on port 8080.
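The host matching described above can be exercised from any machine, even before the wildcard DNS record exists, by sending the Host header explicitly to the gateway's IP. This is a sketch; the function name is hypothetical and the address in the usage line is a documentation placeholder, not a real gateway.

```shell
# Ask the gateway for a given virtual host and print only the HTTP status code.
# $1 is the host the Ingress expects; $2 is the gateway's address.
check_route() {
  curl -s -o /dev/null -w '%{http_code}' --max-time 5 \
    -H "Host: $1" "http://$2/"
}

# Usage (203.0.113.10 is a placeholder for the gateway's static IP):
#   check_route kubernetes-bootcamp.gke1.company.com 203.0.113.10
```

A status from the workload means the whole Gateway -> IngressController -> Workload path is working; a host with no matching Ingress should instead be answered by the IngressController's default backend.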

Next steps

One obvious next step would be to complete this implementation for HTTPS traffic, in addition to HTTP. The TLS certificate generation can also be automated using Let’s Encrypt, so that all traffic is secure by default.

Hi Daniel,

I’m very happy to find your post, especially the gateway idea. I have a question about the http path to the instance.

“http://gke-standard-cluster-3-default-pool-37082251-3h04.us-central1-b.c.myproj-1a337.internal:30080”

– What is “b.c”?

– I’m guessing “myproj-1a337” is your project name?

– Is “.internal” a default convention that I’ll have to use as well or is it different and specified by you somewhere?

– I notice you didn’t mention port 53 for the nginx settings. Is that a typo or nginx knows where to look?

Thanks so much again!

To begin with, that URL is autogenerated by Google

b is the last part of the zone (us-central1-b). I’m not sure what c is.

correct on the project id

.internal means that Google only resolves that URL internal to your project, not to the world

nginx is running in a container, but we have exposed it on port 30080 using a nodeport

Really nice guide, thanks. I now have a cluster to play with

The way I read the pricing change for GKE, you get one zonal cluster for free after June 6, 2020.