Istio Ingress vs. Kubernetes Ingress

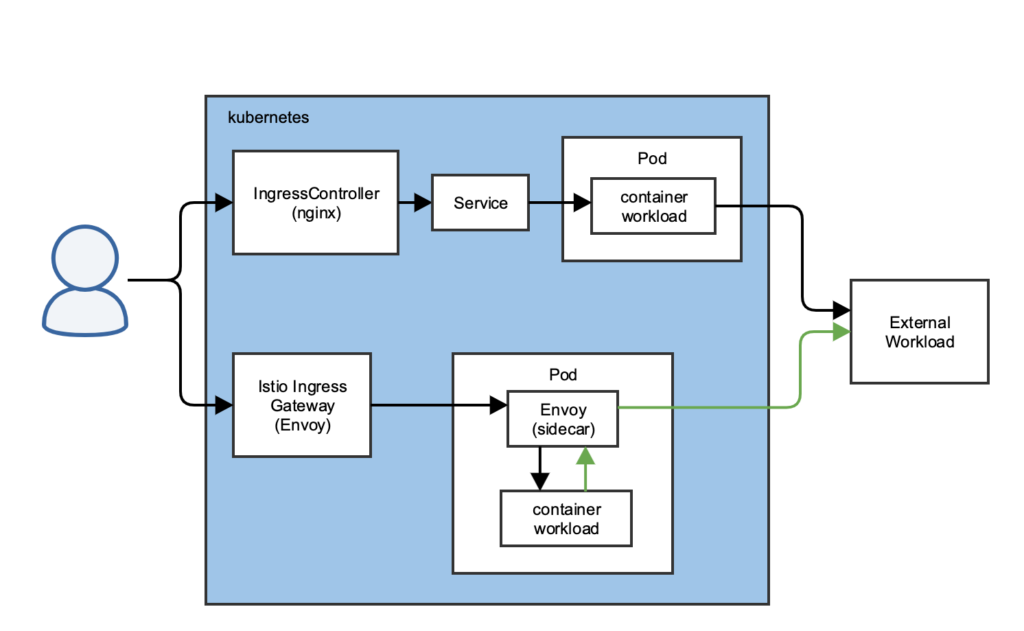

For years I have appreciated the clean and simple way Kubernetes approaches Ingress into container workloads. The idea of an IngressController that dynamically reconfigures itself based on the current state of Ingress resources seemed easy to understand. Istio, on the other hand, felt more confusing, so I set out to correlate what I refer to as “traditional Kubernetes ingress” with Istio ingress. The following diagram will help visualize my comments below.

Dynamic Ingress Control

Load Balancer at the Edge

Both approaches are very similar in how they treat traffic at the edge. Demonstrations usually bypass DNS management and use something like a NodePort for convenience. In practice, edge traffic arrives at a Load Balancer (think ELB or Google Cloud Load Balancing) that distributes traffic across multiple instances of the IngressController (traditional) or Istio Ingress Gateway. A Kubernetes Service defines the Load Balancer and associates it with the IngressController/Istio Ingress Gateway. With this scheme, the Load Balancer is reconfigured automatically whenever the IngressController/Istio Ingress Gateway Pods change.
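
As a rough sketch of the Service described above, the following Python builds a manifest of type LoadBalancer as a plain dictionary and prints it as JSON, which kubectl accepts alongside YAML. The Service name, namespace, selector labels, and target ports are hypothetical placeholders; they would need to match however the IngressController or Istio Ingress Gateway Pods are actually deployed.

```python
import json


def load_balancer_service(name, namespace, selector, target_http=80, target_https=443):
    """Build a Service manifest of type LoadBalancer in front of the ingress proxy Pods."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "type": "LoadBalancer",
            # Must match the labels on the IngressController or gateway Pods.
            "selector": selector,
            "ports": [
                {"name": "http", "port": 80, "targetPort": target_http},
                {"name": "https", "port": 443, "targetPort": target_https},
            ],
        },
    }


# Hypothetical values, for illustration only.
service = load_balancer_service("ingress-proxy", "ingress", {"app": "ingress-proxy"})
print(json.dumps(service, indent=2))
```

Because the Service selects Pods by label, adding or removing proxy Pods changes the set of backends without any edit to the Service itself.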

DNS and decision making

In my experience, a combination of wildcard DNS and API automation of DNS entries is used to manage resolution for workloads coming into the cluster. The LoadBalancer Service configures the load balancer to pass all traffic on ports 80 and 443 through to the IngressController/Istio Ingress Gateway. In this sense it becomes a dumb load balancer that leaves all decisions about what to do with a request to the IngressController/Istio Ingress Gateway.
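
To make the "decision" concrete, here is a minimal sketch of the kind of host-based lookup a proxy performs once the load balancer has passed a request through. This is not how nginx or Envoy implement it; the routing table, hostnames, and backend names are hypothetical, and the single-label wildcard rule is an assumption chosen for illustration.

```python
def select_backend(routes, host, default=None):
    """Pick a backend by Host header: exact match first, then a one-label wildcard."""
    if host in routes:
        return routes[host]
    # "shop.example.com" -> parent "example.com" -> try "*.example.com"
    _, _, parent = host.partition(".")
    if parent:
        return routes.get("*." + parent, default)
    return default


# Hypothetical routing table, as a proxy might derive it from its resources.
routes = {
    "api.example.com": "api-service",
    "*.example.com": "web-service",
}

print(select_backend(routes, "api.example.com"))
print(select_backend(routes, "shop.example.com"))
print(select_backend(routes, "other.org"))
```

With wildcard DNS pointing every subdomain at the load balancer, a lookup like this is the only place where hostnames are told apart.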

Proxy technologies

There are many implementations of the IngressController spec for the traditional kubernetes routing, such as nginx, haproxy and traefik, to name a few. These come with various features (e.g. Let’s Encrypt integration), but all of them satisfy the specification that requires them to be aware of all Ingress resources and route traffic accordingly.

The Istio Ingress Gateway exclusively uses Envoy. Whether this lack of choice is a problem for you will depend on your specific use cases, but Envoy is a solid, very fast proxy that is battle tested by some of the biggest sites in the world.

Automatic (re)configuration

Both approaches implement a type of server-side Service Discovery pattern. The proxy monitors kubernetes resources and configures and reconfigures itself. The diagram below illustrates how this works.

Although the Istio ingress mechanism is more complicated, with three possible Kubernetes resources contributing to the Envoy configuration, the overall approach is almost identical. A mechanism in the ingress proxy observes changes to either the Ingress or the Gateway, VirtualService and DestinationRule resources. When changes are observed relative to the current configuration, the configuration is updated. Each of the numbered steps above is continuous.
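
The observe, compare, update cycle can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the actual controller or Envoy code: the resource shape (a dict with `host` and `backend`) and the class and function names are hypothetical, and a real implementation would receive changes from a watch on the Kubernetes API rather than from a list passed in by hand.

```python
def render_config(resources):
    """Flatten watched resources into a host -> backend routing table."""
    return {res["host"]: res["backend"] for res in resources}


class IngressProxy:
    def __init__(self):
        self.config = {}
        self.reloads = 0

    def reconcile(self, resources):
        """Apply the desired config only when it differs from the current one."""
        desired = render_config(resources)
        if desired == self.config:
            return False
        self.config = desired
        self.reloads += 1
        return True


proxy = IngressProxy()
observed = [{"host": "shop.example.com", "backend": "shop-svc:80"}]
first = proxy.reconcile(observed)    # new route, config is updated
second = proxy.reconcile(observed)   # nothing changed, no update
print(first, second, proxy.reloads)
```

Comparing desired state against current state on every pass is what lets the loop run continuously without reloading the proxy when nothing has changed.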

TLS

Both solutions accommodate TLS certificates at two levels. The first level is the proxy itself: the IngressController (at least this is true with nginx) or the Istio Ingress Gateway. The second level is the individual Ingress or Gateway resource. Both solutions use a Kubernetes Secret to store the TLS certificate and key. However, they work differently in practice, so I’ll describe each solution below.

IngressController (nginx)

The nginx IngressController defines a base/default TLS certificate for all Ingress resources that don’t define their own. If no default TLS certificate is provided, the controller falls back to a self-signed fake certificate. Some IngressController implementations also integrate with Let’s Encrypt. In my case I created a custom Let’s Encrypt automation for Kubernetes that could work with any IngressController, or even the Istio Ingress Gateway.

The Ingress resource can override the default TLS certificate by referencing a different Kubernetes Secret. When this happens, the Ingress-specific Secret is mounted into the IngressController and added to the configuration for that route.
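
As an illustration of that override, the sketch below builds an Ingress manifest (`networking.k8s.io/v1`) as a Python dictionary, adding a `tls` entry with a `secretName` only when a Secret is given. The resource, host, Service, and Secret names are hypothetical. When no Secret is passed, the manifest has no `tls` section and relies on whatever default certificate the controller is configured with.

```python
import json


def ingress_with_tls(name, host, service_name, service_port, tls_secret=None):
    """Build an Ingress; tls_secret overrides the controller's default certificate."""
    spec = {
        "rules": [{
            "host": host,
            "http": {"paths": [{
                "path": "/",
                "pathType": "Prefix",
                "backend": {"service": {"name": service_name,
                                        "port": {"number": service_port}}},
            }]},
        }],
    }
    if tls_secret is not None:
        # The Secret is expected to hold the certificate and key for this host.
        spec["tls"] = [{"hosts": [host], "secretName": tls_secret}]
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": spec,
    }


# Hypothetical names, for illustration only.
default_cert = ingress_with_tls("shop", "shop.example.com", "shop-svc", 80)
own_cert = ingress_with_tls("shop", "shop.example.com", "shop-svc", 80,
                            tls_secret="shop-example-com-tls")
print(json.dumps(own_cert, indent=2))
```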

Istio Ingress Gateway

The Istio Ingress Gateway can also consume Secrets in two different ways. Both approaches require the Secret holding the TLS certificate to exist in the same namespace that hosts the Istio Ingress Gateway. Unlike the IngressController, there is no way to define a default TLS certificate. The certificate must be defined in every Gateway resource.

File Mount TLS certificate

The first TLS approach supported by the Istio Ingress Gateway is based on a naming and path convention. In the file mount approach, a Secret named istio-ingressgateway-certs is mounted into the gateway at /etc/istio/ingressgateway-certs/tls.crt and /etc/istio/ingressgateway-certs/tls.key. Gateway resources can reference these paths directly.

Secret Discovery Service

The second approach is to install the Secret Discovery Service (SDS). A Secret can then be added to the same namespace where the Istio Ingress Gateway is hosted and the Secret can be referenced directly as a credentialName parameter in the Gateway definition.
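
The two approaches differ only in the `tls` block of the Gateway, which the following sketch tries to show side by side. The Gateway names, host, and Secret name are hypothetical, the `istio: ingressgateway` selector assumes the default gateway deployment labels, and the `apiVersion` shown is an assumption that may need adjusting to whatever your Istio release serves.

```python
import json

CERT_DIR = "/etc/istio/ingressgateway-certs"


def file_mount_tls():
    """TLS settings that point at the files mounted from istio-ingressgateway-certs."""
    return {
        "mode": "SIMPLE",
        "serverCertificate": CERT_DIR + "/tls.crt",
        "privateKey": CERT_DIR + "/tls.key",
    }


def sds_tls(credential_name):
    """TLS settings that reference a Secret by name through SDS."""
    return {"mode": "SIMPLE", "credentialName": credential_name}


def gateway(name, host, tls):
    return {
        "apiVersion": "networking.istio.io/v1alpha3",  # assumption, see note above
        "kind": "Gateway",
        "metadata": {"name": name},
        "spec": {
            "selector": {"istio": "ingressgateway"},
            "servers": [{
                "port": {"number": 443, "name": "https", "protocol": "HTTPS"},
                "hosts": [host],
                "tls": tls,
            }],
        },
    }


# Hypothetical names, for illustration only.
mounted = gateway("shop-gateway", "shop.example.com", file_mount_tls())
via_sds = gateway("shop-gateway", "shop.example.com", sds_tls("shop-example-com-tls"))
print(json.dumps(via_sds, indent=2))
```

With the file mount variant every Gateway shares the one mounted certificate, whereas `credentialName` lets each Gateway name its own Secret.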

Kubernetes Service used only for endpoints

When using an IngressController, traffic is routed to a Service, which load balances traffic across available Pods. In the case of the Istio Ingress Gateway, the Kubernetes Service is only used to get a list of endpoints (Pods). Requests are then sent directly to the Envoy proxy in the Pod, bypassing the Service.
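
A toy sketch of that second model: the proxy holds the list of Pod addresses behind a Service and chooses one itself for each request. The class name and Pod addresses are hypothetical, and round-robin is used only as an illustrative policy; Envoy supports several load balancing policies and this is not its implementation.

```python
import itertools


class EndpointPicker:
    """Cycle through Pod addresses taken from a Service's endpoint list."""

    def __init__(self, endpoints):
        if not endpoints:
            raise ValueError("no ready endpoints")
        self._cycle = itertools.cycle(list(endpoints))

    def pick(self):
        # The request goes straight to this Pod address, not to the Service IP.
        return next(self._cycle)


# Hypothetical Pod addresses that a Service's endpoint list might contain.
picker = EndpointPicker(["10.0.1.5:8080", "10.0.2.7:8080"])
chosen = [picker.pick() for _ in range(3)]
print(chosen)
```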

Accessing External Workloads

Another important difference between Istio and traditional Kubernetes is in how traffic routes from a Kubernetes workload to an external workload, such as a database or API. Istio routes this egress traffic through the same sidecar Envoy proxy. The following diagram illustrates this.

As shown above, the green arrow indicates external traffic that goes through the proxy, rather than directly to the external workload. The default is to allow all egress, but Istio can be configured to require an explicit ServiceEntry to access external workloads.
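
For the stricter mode, a ServiceEntry for an external API might look roughly like the manifest built below. The entry name and hostname are hypothetical, and the `apiVersion` is an assumption that may differ by Istio release. As far as I know, the mesh-wide switch between the two behaviours is the `outboundTrafficPolicy` mode (`ALLOW_ANY` versus `REGISTRY_ONLY`), but check the documentation for your version before relying on that.

```python
import json


def service_entry(name, host, port=443, protocol="HTTPS"):
    """Build a ServiceEntry that registers an external host with the mesh."""
    return {
        "apiVersion": "networking.istio.io/v1alpha3",  # assumption, see note above
        "kind": "ServiceEntry",
        "metadata": {"name": name},
        "spec": {
            "hosts": [host],
            "location": "MESH_EXTERNAL",
            "ports": [{"number": port, "name": protocol.lower(), "protocol": protocol}],
            "resolution": "DNS",
        },
    }


# Hypothetical external API, for illustration only.
entry = service_entry("external-api", "api.example.com")
print(json.dumps(entry, indent=2))
```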

Thank you Daniel, this was very helpful.

Hi Daniel,

This is the only article that has helped me finally understand the relation between Ingress, Service and Istio.

I’m glad it was useful. It was challenging for me too, which is why I wanted to capture my thoughts.

Extremely useful post. Thanks! However, I couldn’t quite follow what you said about TLS at two levels.

Another way to say it is that you can define a TLS certificate (probably a wildcard) that will apply to all traffic to your cluster. This is at the gateway level. The second is that for each ingress a unique TLS cert can be defined. If you are using a global default cert, this second level could override it. This would also be required if the same gateway was accommodating multiple domain names and a global wildcard domain wouldn’t work.